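In short, the notebook opens with an introduction to how the kNN algorithm works and its basic idea. That was already covered in an earlier post, Machine Learning Algorithm Notes (1): A First Look at k-Nearest Neighbors (kNN), so it is not repeated here. Let us go straight to the parts of cs231n/classifiers/k_nearest_neighbor.py that we are asked to complete, starting with the three functions that compute distances: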

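The first function uses two loops to compute the L2 distance (the familiar Euclidean distance) between each row vector of the matrix X and each row vector of the matrix self.X_train, storing the results in dists, a matrix of shape num_test × num_train. The two nested loops simply visit every pair of rows from the two matrices and compute one distance at a time.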

    def compute_distances_two_loops(self, X):
        """
        Compute the distance between each test point in X and each training point
        in self.X_train using a nested loop over both the training data and the
        test data.

        Inputs:
        - X: A numpy array of shape (num_test, D) containing test data.

        Returns:
        - dists: A numpy array of shape (num_test, num_train) where dists[i, j]
          is the Euclidean distance between the ith test point and the jth training
          point.
        """
        num_test = X.shape[0]
        num_train = self.X_train.shape[0]
        dists = np.zeros((num_test, num_train))
        for i in range(num_test):
            for j in range(num_train):
                #####################################################################
                # TODO: #
                # Compute the l2 distance between the ith test point and the jth #
                # training point, and store the result in dists[i, j]. You should #
                # not use a loop over dimension, nor use np.linalg.norm(). #
                #####################################################################
                # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

                dists[i, j] = np.sqrt(np.sum((X[i, :] - self.X_train[j, :]) ** 2))

                # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
        return dists

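In the skeleton above, dists = np.zeros((num_test, num_train)) cannot be written as dists = np.zeros(num_test, num_train). The first positional parameter of np.zeros() is the shape of the array and the second is its data type, dtype (simply omitted in this example). For a 5×2 matrix, writing np.zeros(5, 2) would mean shape=5 and dtype=2, which is invalid and raises a TypeError. Only the tuple (5, 2), taken as a whole, describes a 5×2 shape.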
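The difference between the two call forms is easy to check in isolation. The snippet below is a minimal sketch that is not part of the assignment; the variable names a and raised are chosen here purely for illustration.

```python
import numpy as np

# The shape is passed as a single tuple: a 5x2 matrix of zeros.
a = np.zeros((5, 2))
print(a.shape)  # (5, 2)
print(a.dtype)  # float64

# With two positional arguments NumPy reads the second one (2) as the dtype,
# which is not a valid data type, so the call fails.
try:
    np.zeros(5, 2)
    raised = False
except TypeError as err:
    raised = True
    print("TypeError:", err)
```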

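The second function computes the same distances but may use only one loop. Here we rely on numpy broadcasting: when a vector of length D is combined elementwise (added, subtracted, multiplied or divided) with a matrix that has D columns, the vector is virtually repeated along the rows until it matches the matrix. We subtract the whole training matrix from the test vector, square the result, sum each row across its columns (np.sum with the argument axis = 1), and take the square root of the resulting vector.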

    def compute_distances_one_loop(self, X):
        """
        Compute the distance between each test point in X and each training point
        in self.X_train using a single loop over the test data.

        Input / Output: Same as compute_distances_two_loops
        """
        num_test = X.shape[0]
        num_train = self.X_train.shape[0]
        dists = np.zeros((num_test, num_train))
        for i in range(num_test):
            #######################################################################
            # TODO: #
            # Compute the l2 distance between the ith test point and all training #
            # points, and store the result in dists[i, :]. #
            # Do not use np.linalg.norm(). #
            #######################################################################
            # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

            dists[i, :] = np.sqrt(np.sum((X[i, :] - self.X_train) ** 2, axis=1))

            # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
        return dists
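The broadcasting step in the one-loop version can be seen on a tiny example. This is only a sketch with made-up data; the names train, x, diff and row_dists are hypothetical and do not appear in the assignment.

```python
import numpy as np

train = np.array([[0.0, 0.0],
                  [3.0, 4.0],
                  [6.0, 8.0]])  # shape (3, 2): three "training" rows
x = np.array([3.0, 4.0])       # shape (2,): a single "test" row

# x is broadcast against every row of train, so diff has shape (3, 2).
diff = x - train
# Summing along axis=1 collapses the columns and leaves one value per row.
row_dists = np.sqrt(np.sum(diff ** 2, axis=1))

print(diff.shape)  # (3, 2)
print(row_dists)   # [5. 0. 5.]
```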

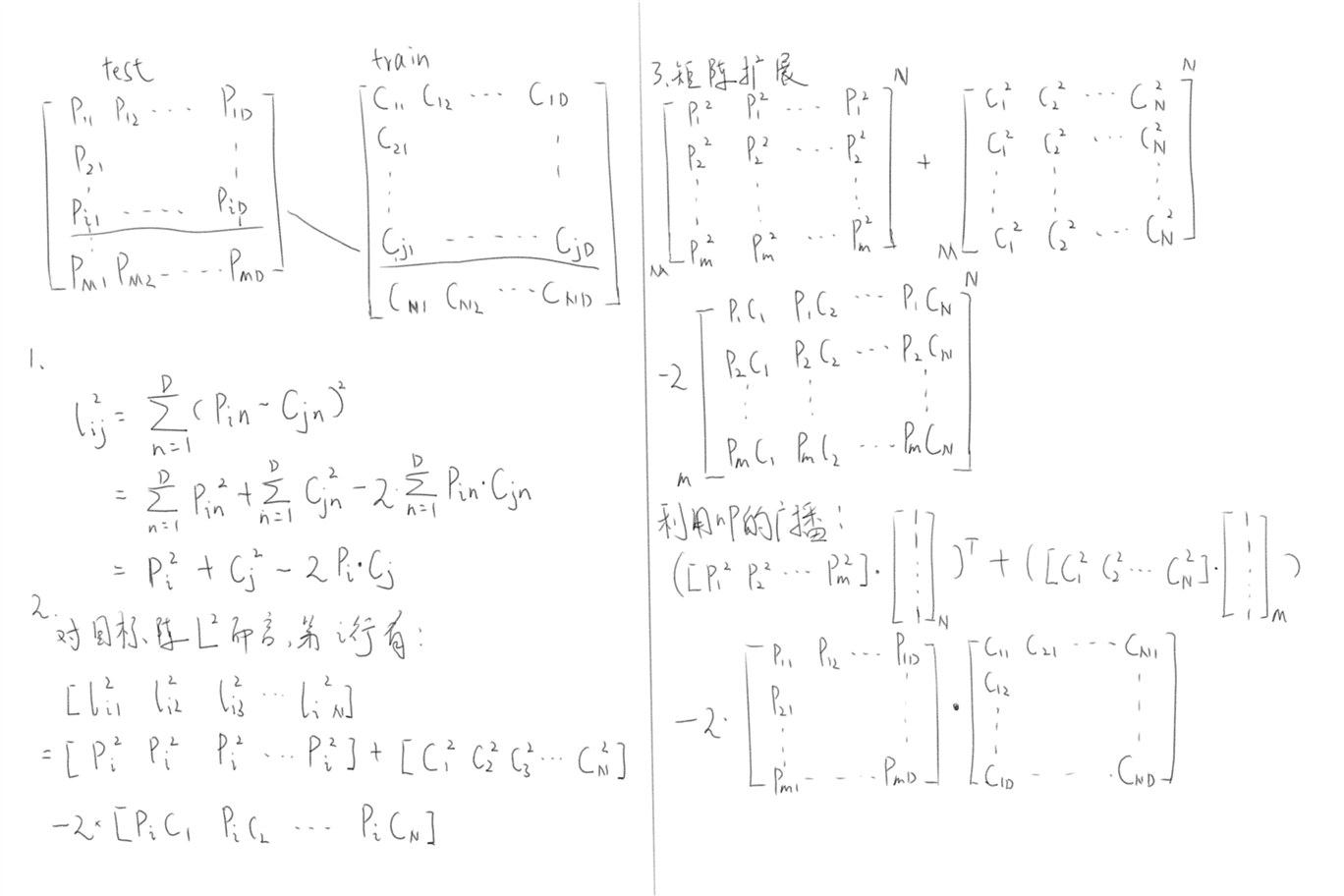

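The third function has to compute the distances without any loop. It rests on expanding the squared L2 distance, $(a-b)^2 = a^2 + b^2 - 2ab$, and on numpy broadcasting, so that each of the three terms can be computed for all pairs of vectors at once.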
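Written out per entry, the expansion looks as follows. This is a reconstruction under notation introduced here for illustration: $x_i$ is the i-th row of X, $t_j$ is the j-th row of self.X_train, and $D$ is the number of features.

```latex
\begin{aligned}
\mathrm{dists}[i,j]^2
  &= \lVert x_i - t_j \rVert^2
   = \sum_{d=1}^{D} \left(x_{id} - t_{jd}\right)^2 \\
  &= \underbrace{\sum_{d=1}^{D} x_{id}^2}_{\text{depends only on } i}
   + \underbrace{\sum_{d=1}^{D} t_{jd}^2}_{\text{depends only on } j}
   - 2 \underbrace{\sum_{d=1}^{D} x_{id}\, t_{jd}}_{\left(X X_{\text{train}}^{\top}\right)_{ij}}
\end{aligned}
```

The first term is one number per test row, the second is one number per training row, and the third is an entry of a single matrix product, which is why two broadcast sums and one matrix multiplication are enough.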

This leads to the following implementation:

    def compute_distances_no_loops(self, X):
        """
        Compute the distance between each test point in X and each training point
        in self.X_train using no explicit loops.

        Input / Output: Same as compute_distances_two_loops
        """
        num_test = X.shape[0]
        num_train = self.X_train.shape[0]
        dists = np.zeros((num_test, num_train))
        #########################################################################
        # TODO: #
        # Compute the l2 distance between all test points and all training #
        # points without using any explicit loops, and store the result in #
        # dists. #
        # #
        # You should implement this function using only basic array operations; #
        # in particular you should not use functions from scipy, #
        # nor use np.linalg.norm(). #
        # #
        # HINT: Try to formulate the l2 distance using matrix multiplication #
        # and two broadcast sums. #
        #########################################################################
        # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

        # Squared norms of the test rows, repeated across columns: (num_test, num_train)
        r1 = (np.sum(np.square(X), axis=1) * np.ones((num_train, 1))).T
        # Squared norms of the training rows, repeated across rows: (num_test, num_train)
        r2 = np.sum(np.square(self.X_train), axis=1) * np.ones((num_test, 1))
        # -2 times the dot product of every test/training pair: (num_test, num_train)
        r3 = -2 * np.dot(X, self.X_train.T)
        dists = np.sqrt(r1 + r2 + r3)

        # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
        return dists

r1, r2 and r3 are simply the three terms of the expanded square, evaluated on whole matrices rather than on scalars.
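One way to convince yourself that the expanded form matches the direct definition is to compare both on small random data. The sketch below runs on its own, outside the classifier class. All names in it are hypothetical, and clipping with np.maximum is an extra safeguard against rounding that the solution above does not use.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))        # 4 hypothetical test points, D = 3
X_train = rng.normal(size=(5, 3))  # 5 hypothetical training points

# Expanded form: ||x||^2 + ||t||^2 - 2 x.t, assembled with broadcasting.
test_sq = np.sum(X ** 2, axis=1)[:, np.newaxis]         # shape (4, 1)
train_sq = np.sum(X_train ** 2, axis=1)[np.newaxis, :]  # shape (1, 5)
cross = X @ X_train.T                                   # shape (4, 5)
sq_dists = test_sq + train_sq - 2.0 * cross
# Rounding can leave tiny negative values; clip them before the square root.
dists_fast = np.sqrt(np.maximum(sq_dists, 0.0))

# Reference: explicit double loop over all pairs.
dists_slow = np.zeros((4, 5))
for i in range(4):
    for j in range(5):
        dists_slow[i, j] = np.sqrt(np.sum((X[i] - X_train[j]) ** 2))

print(np.allclose(dists_fast, dists_slow))  # True
```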

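Next comes the prediction function. For each test row vector we first find the k training vectors closest to it (conveniently, all the distances are already stored in the dists matrix). The labels of those vectors then vote, and the most frequent label becomes the prediction for that test vector. A for loop, already provided in the skeleton, repeats these steps for every vector in the test set.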

    def predict_labels(self, dists, k=1):
        """
        Given a matrix of distances between test points and training points,
        predict a label for each test point.

        Inputs:
        - dists: A numpy array of shape (num_test, num_train) where dists[i, j]
          gives the distance between the ith test point and the jth training point.

        Returns:
        - y: A numpy array of shape (num_test,) containing predicted labels for the
          test data, where y[i] is the predicted label for the test point X[i].
        """
        num_test = dists.shape[0]
        y_pred = np.zeros(num_test)
        for i in range(num_test):
            # A list of length k storing the labels of the k nearest neighbors to
            # the ith test point.
            closest_y = []
            #########################################################################
            # TODO: #
            # Use the distance matrix to find the k nearest neighbors of the ith #
            # testing point, and use self.y_train to find the labels of these #
            # neighbors. Store these labels in closest_y. #
            # Hint: Look up the function numpy.argsort. #
            #########################################################################
            # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

            # Indices of the k training vectors with the smallest distances.
            index = np.argsort(dists[i, :])[0:k]
            # numpy fancy indexing: passing an array of indices returns the
            # corresponding sub-array directly.
            closest_y = list(self.y_train[index])

            # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
            #########################################################################
            # TODO: #
            # Now that you have found the labels of the k nearest neighbors, you #
            # need to find the most common label in the list closest_y of labels. #
            # Store this label in y_pred[i]. Break ties by choosing the smaller #
            # label. #
            #########################################################################
            # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

            # Counter(...).most_common(1) returns a one-element list holding a
            # (label, count) tuple, so [0][0] extracts the label itself.
            # Sorting first makes ties resolve to the smaller label, because
            # equal counts are returned in first-encountered order.
            # Requires `from collections import Counter` at the top of the file.
            y_pred[i] = Counter(sorted(closest_y)).most_common(1)[0][0]
            # The most frequent value can also be obtained with
            #   y_pred[i] = max(closest_y, key=closest_y.count)
            # which resolves ties by first occurrence, not by the smaller label.

            # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
        return y_pred
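The two steps of the prediction (select the k nearest indices, then vote) can be tried on a single row of distances. This is a minimal sketch with made-up labels and distances; y_train, dist_row, nearest, label and tie are hypothetical names used only here.

```python
from collections import Counter

import numpy as np

y_train = np.array([2, 0, 1, 0, 2])             # hypothetical training labels
dist_row = np.array([0.9, 0.1, 0.5, 0.3, 0.2])  # distances from one test point
k = 3

nearest = np.argsort(dist_row)[:k]     # indices of the k smallest distances
closest_y = y_train[nearest].tolist()  # fancy indexing -> labels of those neighbours

# Sorting first means that, among tied labels, the smallest one is counted
# first and is therefore the one most_common(1) returns.
label = Counter(sorted(closest_y)).most_common(1)[0][0]
tie = Counter(sorted([2, 1])).most_common(1)[0][0]

print(nearest)    # [1 4 3]
print(closest_y)  # [0, 2, 0]
print(label)      # 0
print(tie)        # 1
```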

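Back in knn.ipynb in the assignment root directory, running the earlier cells should now work without problems. The last part asks us to write the cross-validation code. Cross-validation was discussed in Machine Learning Algorithm Notes (18): Validation Sets and Cross-Validation, so it is not repeated here. The idea is as follows: first split the training data and the training labels into num_folds parts (the number of folds, called n below). Then, for each candidate k, run n-fold cross-validation, where n-1 parts form the training set and the remaining part is the validation set. After n rounds, every part has served as the validation set once, and the n validation accuracies are stored in the list under key k. This continues until all values of k have been tried.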

    num_folds = 5
    k_choices = [1, 3, 5, 8, 10, 12, 15, 20, 50, 100]

    X_train_folds = []
    y_train_folds = []
    ################################################################################
    # TODO: #
    # Split up the training data into folds. After splitting, X_train_folds and #
    # y_train_folds should each be lists of length num_folds, where #
    # y_train_folds[i] is the label vector for the points in X_train_folds[i]. #
    # Hint: Look up the numpy array_split function. #
    ################################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

    # Split the training data and the training labels into num_folds folds.
    X_train_folds = np.array_split(X_train, num_folds, axis=0)
    y_train_folds = np.array_split(y_train, num_folds, axis=0)

    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

    # A dictionary holding the accuracies for different values of k that we find
    # when running cross-validation. After running cross-validation,
    # k_to_accuracies[k] should be a list of length num_folds giving the different
    # accuracy values that we found when using that value of k.
    k_to_accuracies = {}

    ################################################################################
    # TODO: #
    # Perform k-fold cross validation to find the best value of k. For each #
    # possible value of k, run the k-nearest-neighbor algorithm num_folds times, #
    # where in each case you use all but one of the folds as training data and the #
    # last fold as a validation set. Store the accuracies for all fold and all #
    # values of k in the k_to_accuracies dictionary. #
    ################################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

    for k in k_choices:
        k_to_accuracies[k] = []
        for i in range(num_folds):
            # Build the training set for this round from every fold except fold i.
            # np.concatenate joins the arrays along an axis (axis=0 by default).
            X_temp = np.concatenate([X_train_folds[j] for j in range(num_folds) if j != i])
            y_temp = np.concatenate([y_train_folds[j] for j in range(num_folds) if j != i])
            # Train on the merged folds and predict on the held-out fold i.
            classifier.train(X_temp, y_temp)
            y_predict = classifier.predict(X_train_folds[i], k=k, num_loops=0)
            # Number of correct predictions on the validation fold.
            num_correct = np.sum(y_predict == y_train_folds[i])
            # Accuracy of this round: correct predictions divided by the size of
            # the validation fold, appended to the list stored under key k.
            k_to_accuracies[k].append(1.0 * num_correct / len(y_train_folds[i]))

    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****

    # Print out the computed accuracies
    for k in sorted(k_to_accuracies):
        for accuracy in k_to_accuracies[k]:
            print('k = %d, accuracy = %f' % (k, accuracy))
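The fold bookkeeping in the loop above can be checked on a toy array before running it on the real data. This is only a sketch: X, y, X_fit, X_val and the other names are hypothetical stand-ins for the notebook's variables, and no classifier is involved.

```python
import numpy as np

X = np.arange(20).reshape(10, 2)  # 10 hypothetical samples with 2 features each
y = np.arange(10)                 # hypothetical labels, one per sample
num_folds = 5

X_folds = np.array_split(X, num_folds, axis=0)  # list of 5 arrays, each (2, 2)
y_folds = np.array_split(y, num_folds, axis=0)  # list of 5 arrays, each (2,)

i = 1  # index of the fold held out for validation in this round
X_fit = np.concatenate([X_folds[j] for j in range(num_folds) if j != i])
y_fit = np.concatenate([y_folds[j] for j in range(num_folds) if j != i])
X_val, y_val = X_folds[i], y_folds[i]

print(X_fit.shape, X_val.shape)  # (8, 2) (2, 2)
print(y_val)                     # [2 3]
print(y_fit)                     # [0 1 4 5 6 7 8 9]
```

Every sample lands in exactly one of the two sets for a given i, which is the property the cross-validation loop relies on.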